Microscopic 3D navigation system (Japanese patent application project)

This is a research and patent application project on microsurgical navigation. This page discloses only the project-level technical approach, intended uses and interim results; it does not disclose the full text of the unpublished Japanese patent application documents.

Project overview

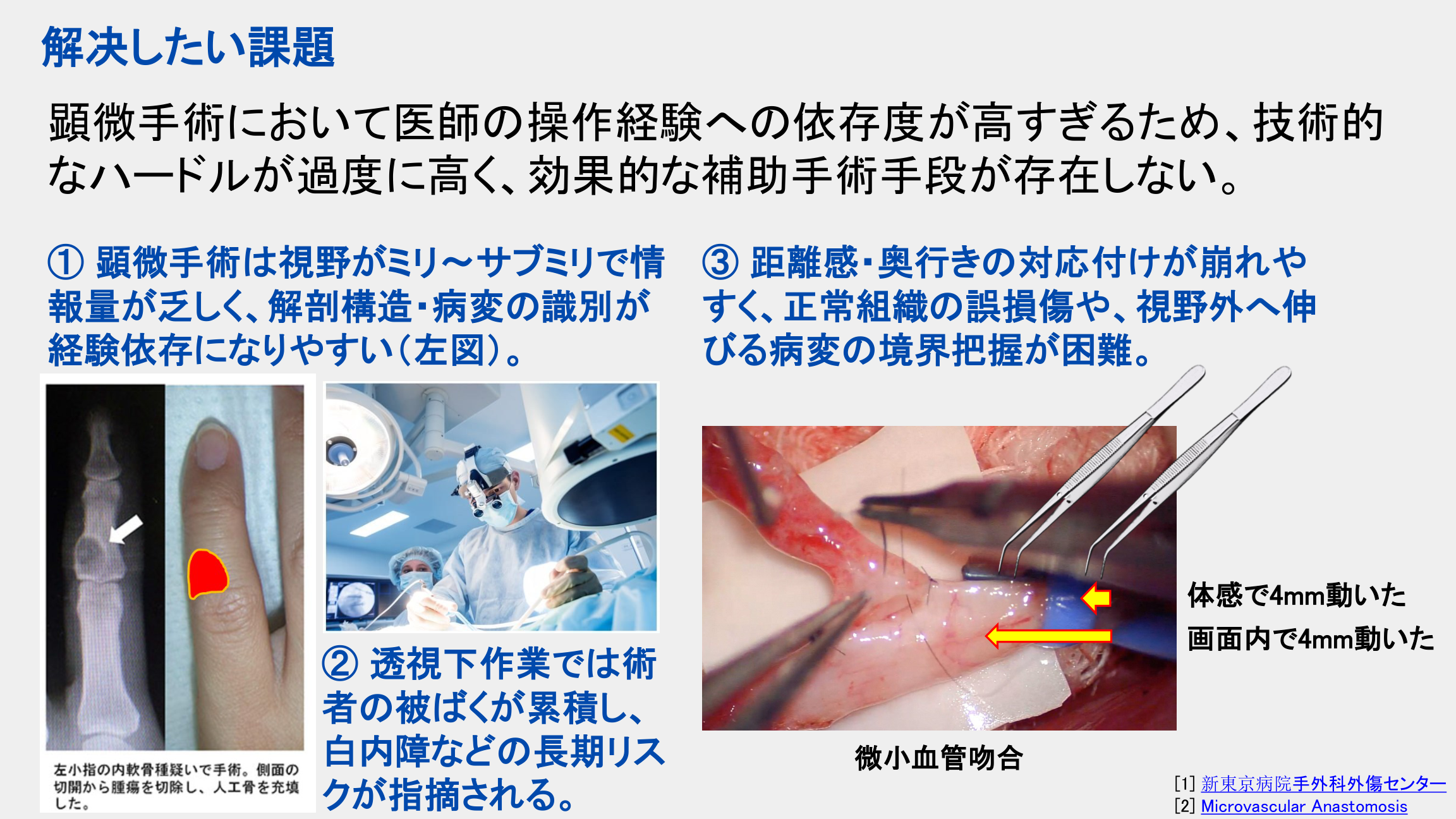

This project addresses a long-standing pain point in microsurgery: working in a very small field of view, surgeons depend heavily on personal experience. Within that limited view it is hard to identify anatomical structures and lesion boundaries reliably, and hard to maintain a continuous three-dimensional sense of space.

Rather than simply miniaturizing the large marker frames or standalone tracking systems of traditional intraoperative navigation, this solution redesigns the navigation architecture for microsurgical scenarios, integrating visible-light images, near-infrared structural information and surgical tool tracking information into the same field of view.

Three core technical routes

- Spectroscopic stereoscopic vision: Different wavelengths serve different functions. Visible light handles display and AI analysis, while near-infrared handles both structured illumination and tool tracking, so a single imaging system provides both “clear vision” and “positioning”.

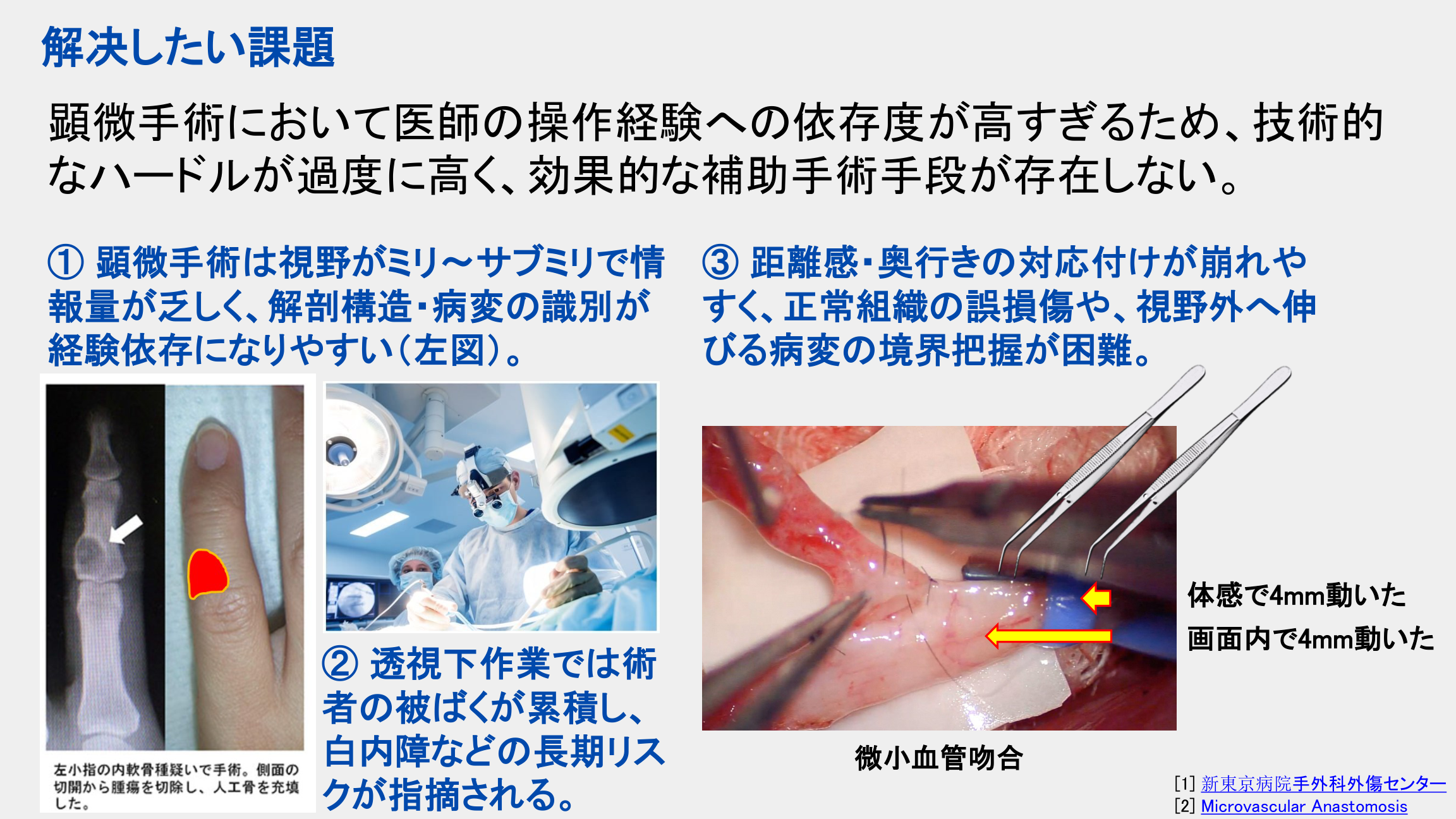

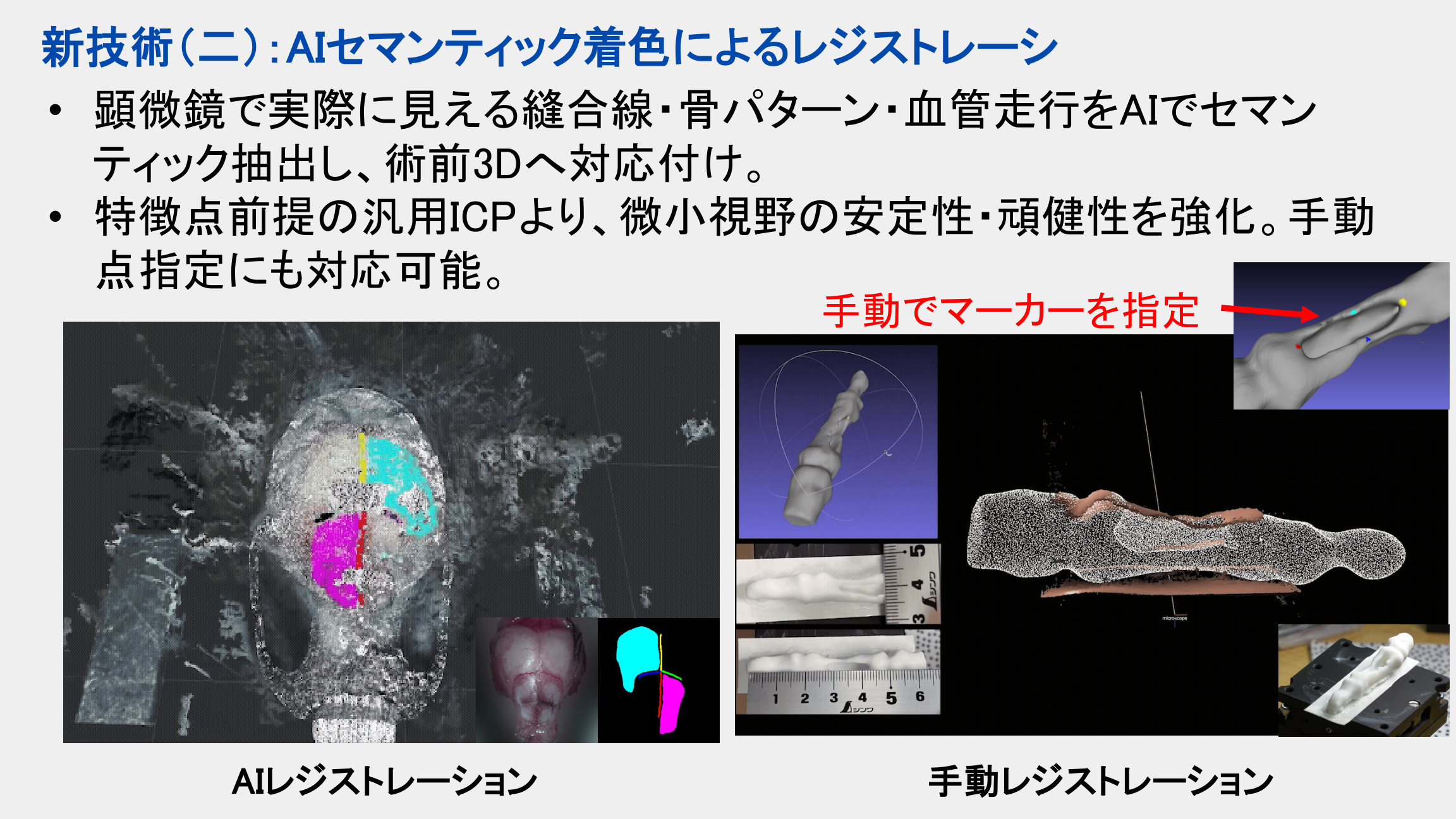

- AI semantic registration: Semantic features are extracted from structures that are actually visible under the microscope, such as sutures, bone surface textures or blood vessel courses, and mapped to the preoperative three-dimensional model to improve registration stability and robustness in small fields of view.

- Fiber-optic tool tracking: Ultra-thin optical fibers embedded in surgical tools allow the tool tips to be tracked with a high signal-to-noise ratio in the microscopic operating field. This avoids the problems of traditional large markers, which block the field of view or are difficult to place in delicate scenes.
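To make the "positioning" side of a stereoscopic setup concrete, here is a minimal sketch of how a single bright tool-tip point seen in both views could be turned into a 3D position. It assumes a textbook rectified pinhole stereo pair; the project page does not describe its actual optical geometry or algorithm, so the `StereoRig` class, the `triangulate_tip` function and all numeric values are hypothetical and purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StereoRig:
    """Hypothetical rectified stereo pair; every value here is an assumption."""
    focal_px: float     # focal length, in pixels
    baseline_mm: float  # distance between the two optical centres, in mm
    cx: float           # principal point x, in pixels
    cy: float           # principal point y, in pixels


def triangulate_tip(rig, left_px, right_px):
    """Return (X, Y, Z) in mm, in the left-camera frame, for one tracked point.

    left_px and right_px are (u, v) pixel coordinates of the same point in
    the rectified left and right images.
    """
    (ul, vl), (ur, _vr) = left_px, right_px
    disparity = ul - ur
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the rig")
    z = rig.focal_px * rig.baseline_mm / disparity  # depth from disparity
    x = (ul - rig.cx) * z / rig.focal_px            # back-project u
    y = (vl - rig.cy) * z / rig.focal_px            # back-project v
    return (x, y, z)


# Illustrative numbers only, not measurements from the project.
rig = StereoRig(focal_px=2000.0, baseline_mm=20.0, cx=640.0, cy=480.0)
tip = triangulate_tip(rig, left_px=(700.0, 500.0), right_px=(500.0, 500.0))
print(tip)  # (6.0, 2.0, 200.0)
```

In this simplified model, depth resolution depends on the focal length, the baseline and how precisely the point can be localized in each image, which is one reason a compact, high-contrast point source is attractive as a tracking target.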

Why this route makes sense

- It attempts to combine the tool tracking capabilities of traditional Type A navigation systems with the real-scene visualization capabilities of Type B systems in a single solution for the microscopic scene.

- It targets the fine operating scales that traditional navigation systems find hardest to cover, rather than conventional open surgery or ordinary instrument scales.

- It serves clinical navigation, research platform and skill assessment scenarios at the same time, so it has value both for potential clinical translation and as an experimental system.

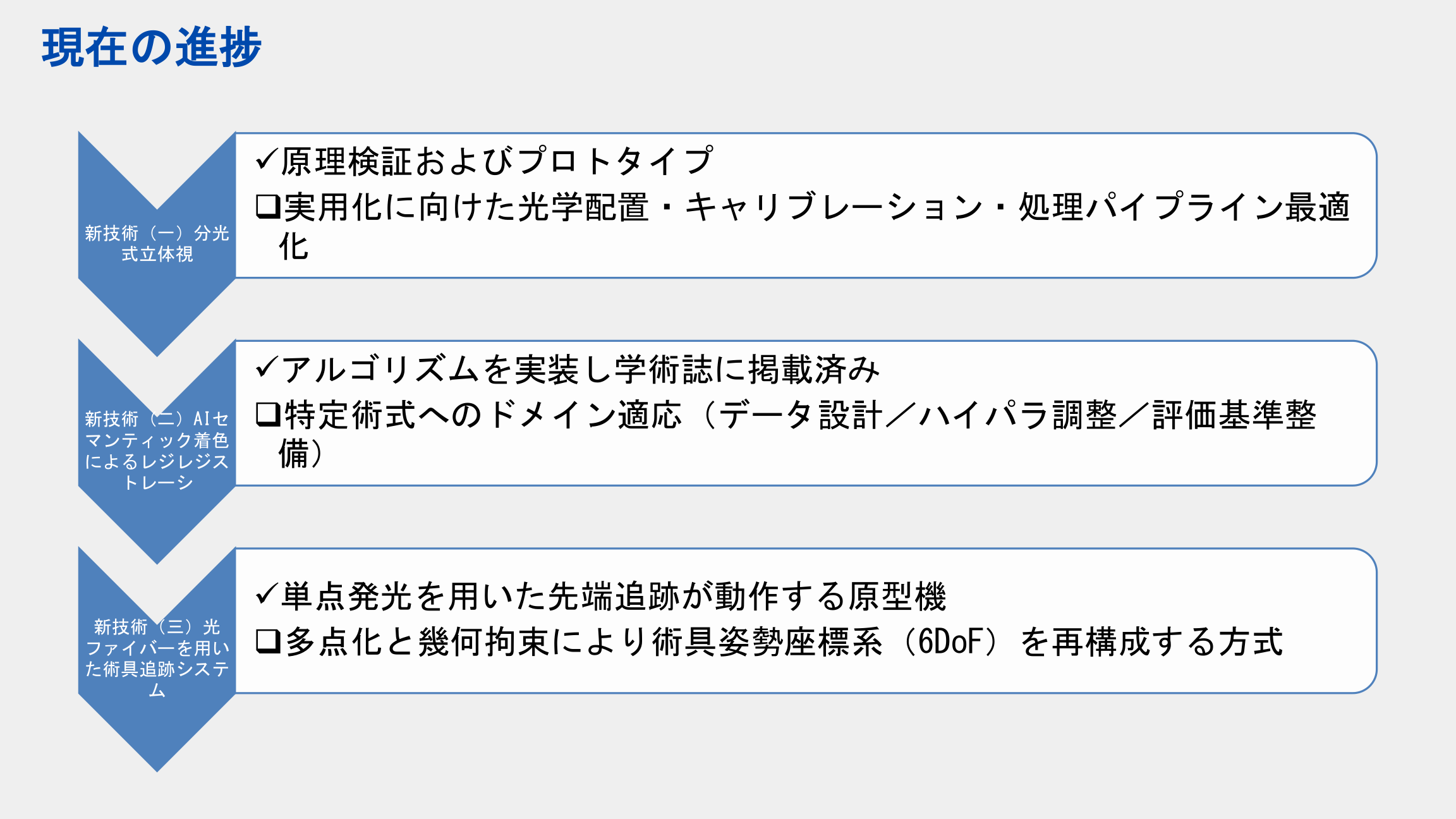

Current progress

- Spectroscopic stereoscopic vision: proof of principle and a prototype have been completed. The next step focuses on optimizing the optical configuration, calibration and processing pipeline.

- AI registration: the algorithm has been implemented and the results have been written up as a paper. Future work will continue on surgical adaptation, data design and evaluation criteria.

- Fiber-optic tool tracking: a single-point illumination prototype has been completed. The next stage will extend it to multi-point constraints and more complete pose reconstruction.

Scope of public disclosure

The Japanese patent application corresponding to this project has not yet been published. For that reason, neither the filing receipt nor the application text is available for download here, and the application numbers and complete claims of the unpublished application are not disclosed. This page contains only project-level descriptions and supplementary slides that are safe for public display.

Supplementary illustration
